Hi 👋, I am a Research Scientist at Apple MLR. I completed my PhD from the Department of Computer Science at University of Maryland, College Park, where I was advised by Prof. Dinesh Manocha, Distinguished Professor and Paul Chrisman Iribe Chair. My research focuses on advancing multimodal foundation models, with an emphasis on multimodal reasoning, agentic capabilities, fine-grained perception, and robustness across visual, audio, and language modalities.

During my PhD, I worked closely with several industry research groups. I was a Research Intern at Apple Machine Learning Research hosted by Chun-Liang Li and Karren Yang. I also spent the summer of '24 at Meta Reality Labs as a Research Scientist Intern hosted by Ruohan Gao. Previously, I was a Student Researcher at Google Research with Avisek Lahiri and Vivek Kwatra on speech-driven facial synthesis in the Talking Heads team. I also worked with Adobe Research as a PhD Research Intern with Joseph K J on multimodal audio generation. I have also collaborated with Prof. Kristen Grauman, Prof. Salman Khan, and Prof. Mohamed Elhoseiny.

Before starting my PhD, I worked as a Machine Learning Scientist with the Camera and Video AI team at ShareChat, India. I was also a Visiting Researcher at the Computer Vision and Pattern Recognition Unit at Indian Statistical Institute Kolkata under Prof. Ujjwal Bhattacharya. Earlier, I was a Senior Research Engineer with the Vision Intelligence Group at Samsung R&D Institute Bangalore, where I worked on AI-powered perception and vision systems for consumer devices.

I received my MTech in Computer Science & Engineering from IIIT Hyderabad, where I was advised by Prof. C V Jawahar. During my undergraduate studies, I worked as a research intern with Prof. Pabitra Mitra at IIT Kharagpur and at the CVPR Unit at ISI Kolkata.

-

Feel free to reach out if you're interested in research collaboration!

Email /

GitHub /

Google Scholar /

LinkedIn /

Twitter

|

|

Selected publications

I am broadly interested in problems at the intersection of Computer Vision, Computer Audition, and Machine Learning, with the goal of building AI systems that can perceive, reason, and interact with complex real-world environments. My research focuses on multimodal learning (Vision + X), particularly for generative modeling and cross-modal understanding with minimal supervision.

In the past, I have also worked on problems in computational photography, including image reflection removal, intrinsic image decomposition, inverse rendering, and video quality assessment.

Representative papers are highlighted below. For a complete list of publications, please refer to my

Google Scholar.

|

|

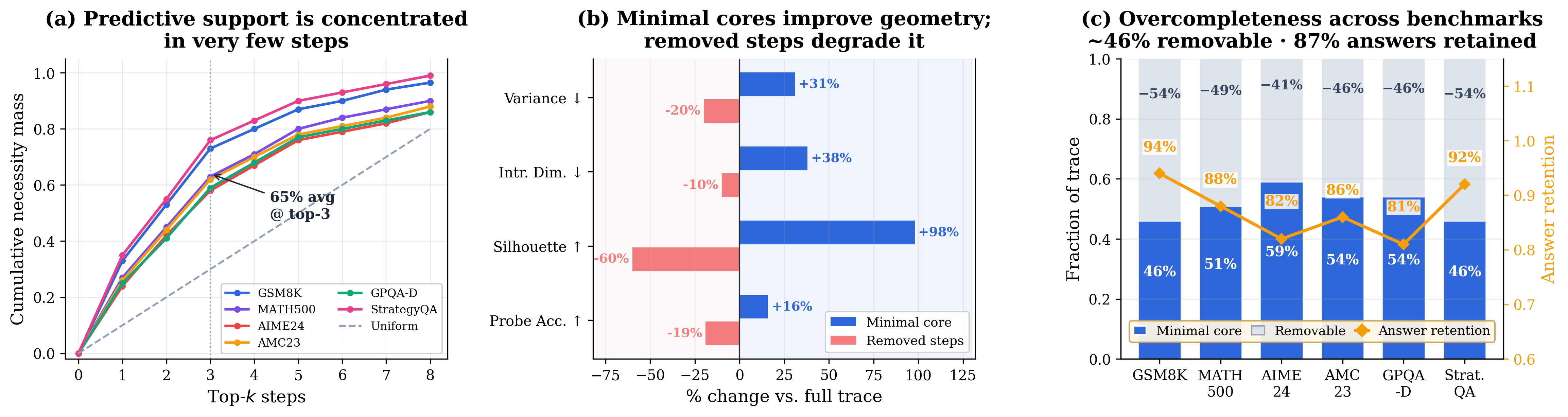

Uncovering the Representation Geometry of Minimal Cores in Overcomplete Reasoning Traces Uncovering the Representation Geometry of Minimal Cores in Overcomplete Reasoning Traces

Sanjoy Chowdhury, Dinesh Manocha

arXiv Preprint, 2026

Paper

|

|

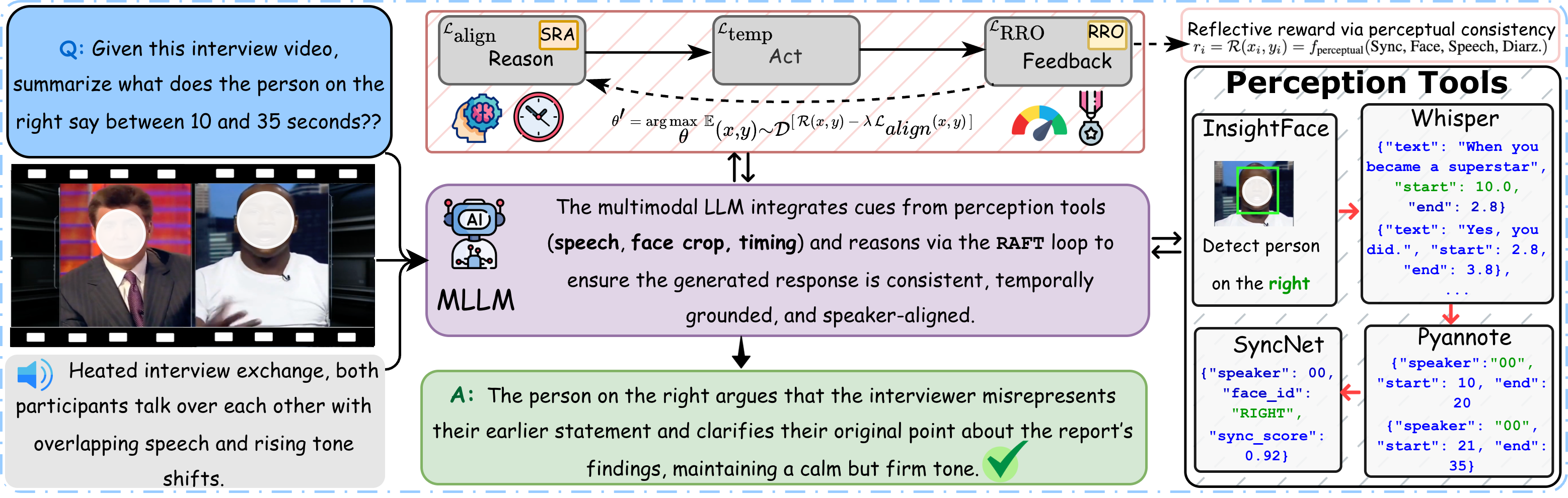

AMusE: Audio-Visual Benchmark and Alignment Framework for Agentic Multi-Speaker Understanding AMusE: Audio-Visual Benchmark and Alignment Framework for Agentic Multi-Speaker Understanding

Sanjoy Chowdhury, Karren D. Yang, Xudong Liu, Fartash Faghri, Pavan Kumar Anasosalu Vasu, Oncel Tuzel, Dinesh Manocha, Chun-Liang Li, Raviteja Vemulapalli

Conference on Computer Vision and Pattern Recognition (CVPR), 2026

Paper /

Project Page (Coming soon) /

|

|

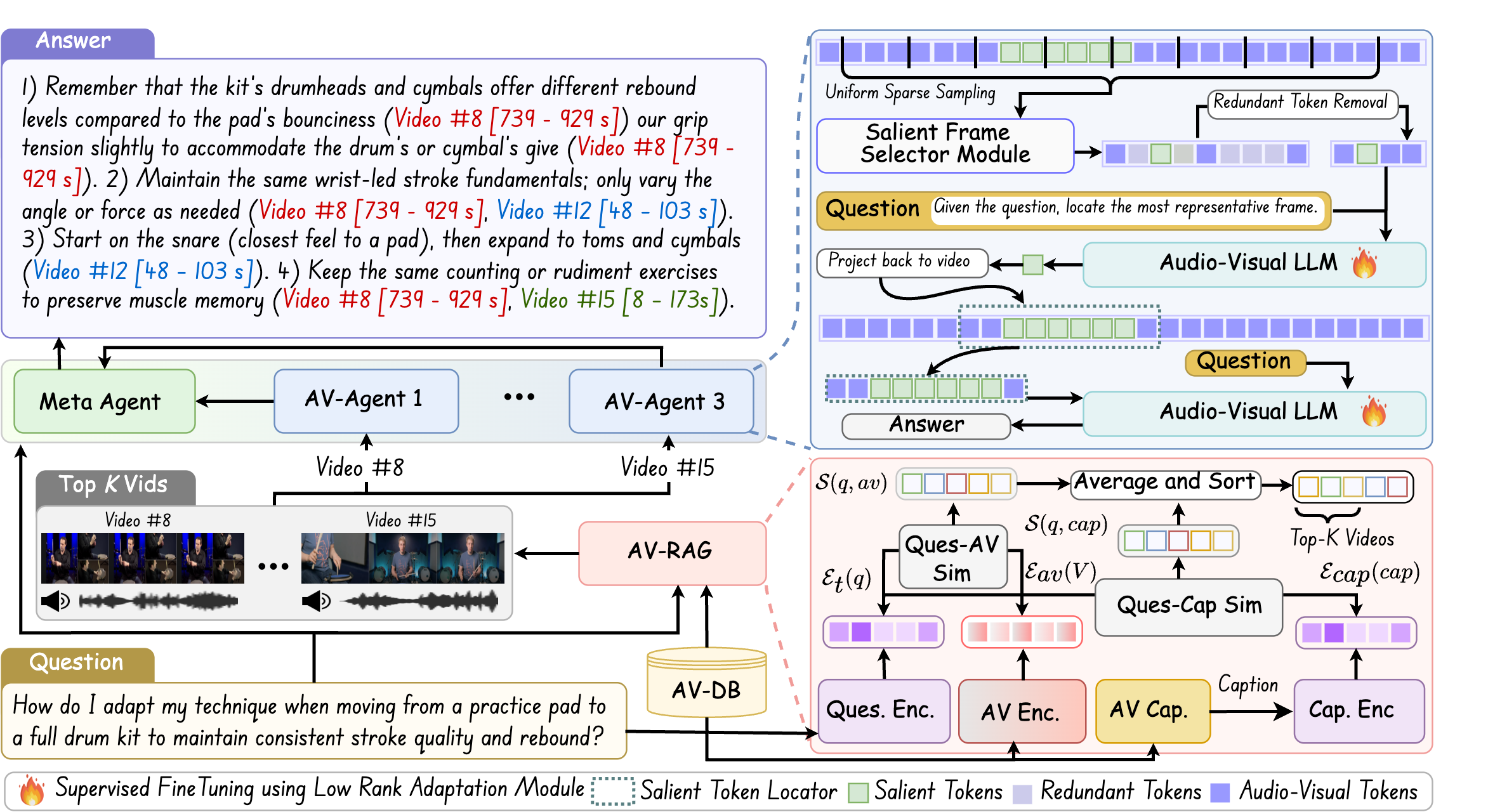

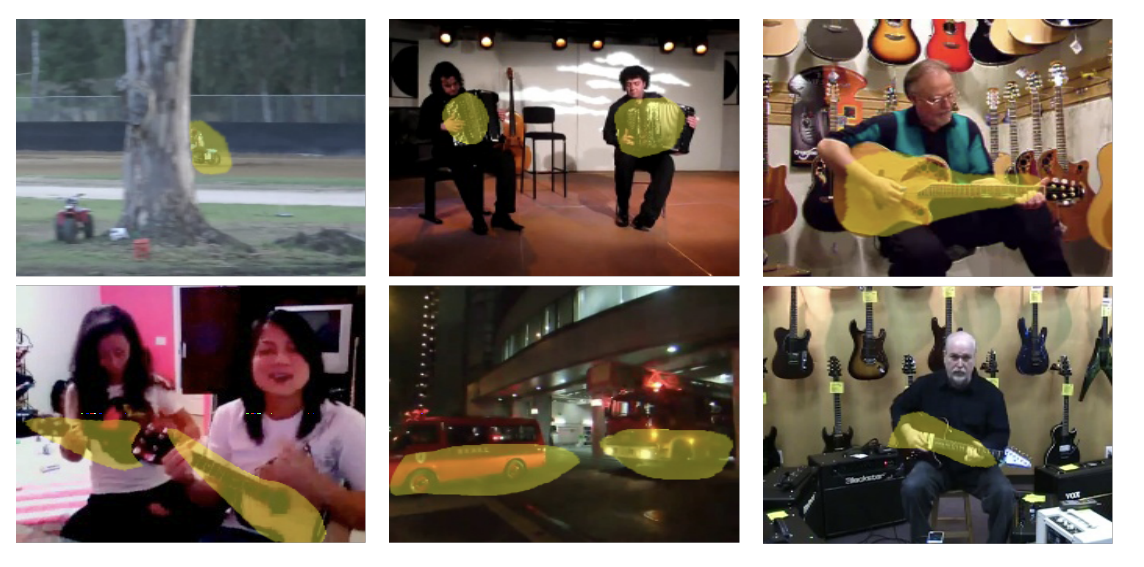

MAGNET: A Multi-agent Framework for Finding Audio-Visual Needles by Reasoning over Multi-Video Haystacks MAGNET: A Multi-agent Framework for Finding Audio-Visual Needles by Reasoning over Multi-Video Haystacks

Sanjoy Chowdhury, Mohamed Elmoghany, Yohan Abeysinghe, Junjie Fei, Sayan Nag, Salman Khan, Mohamed Elhoseiny, Dinesh Manocha

Annual Conference on Neural Information Processing Systems (NeurIPS), 2025

Paper /

Project Page /

Poster /

Huggingface /

Kaggle /

Code

|

|

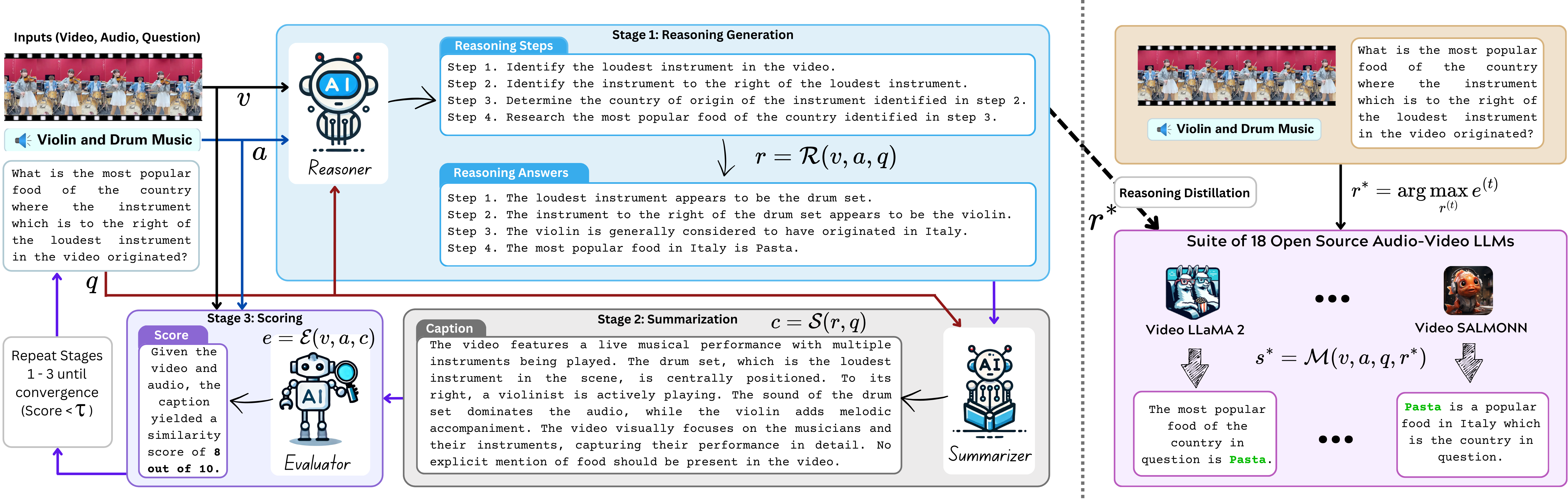

AURELIA: Test-time Reasoning Distillation in Audio-Visual LLMs AURELIA: Test-time Reasoning Distillation in Audio-Visual LLMs

Sanjoy Chowdhury*, Hanan Gani*, Nishit Anand, Sayan Nag, Ruohan Gao, Mohamed Elhoseiny, Salman Khan, Dinesh Manocha

International Conference on Computer Vision (ICCV), 2025

Paper /

Project Page /

Code

|

|

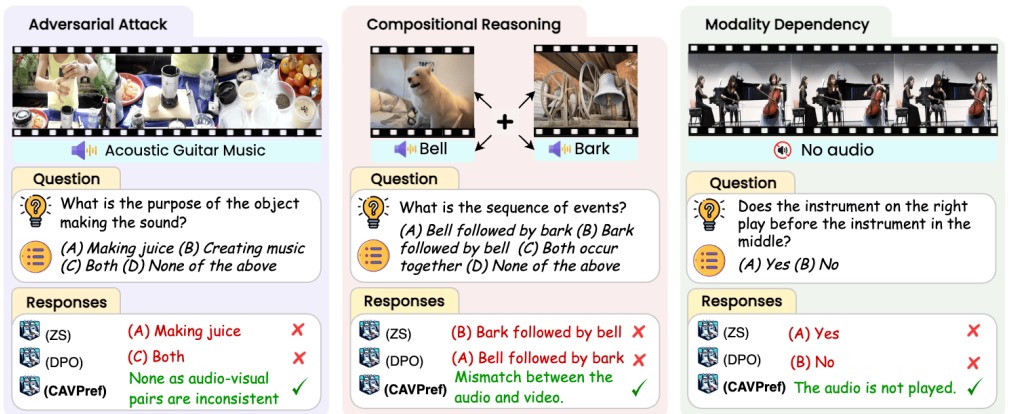

AVTrustBench: Assessing and Enhancing Reliability and Robustness in Audio-Visual LLMs

Sanjoy Chowdhury*, Sayan Nag*, Subhrajyoti Dasgupta, Yaoting Wang, Mohamed Elhoseiny, Ruohan Gao, Dinesh Manocha

International Conference on Computer Vision (ICCV), 2025

Paper /

Project Page /

Code

|

|

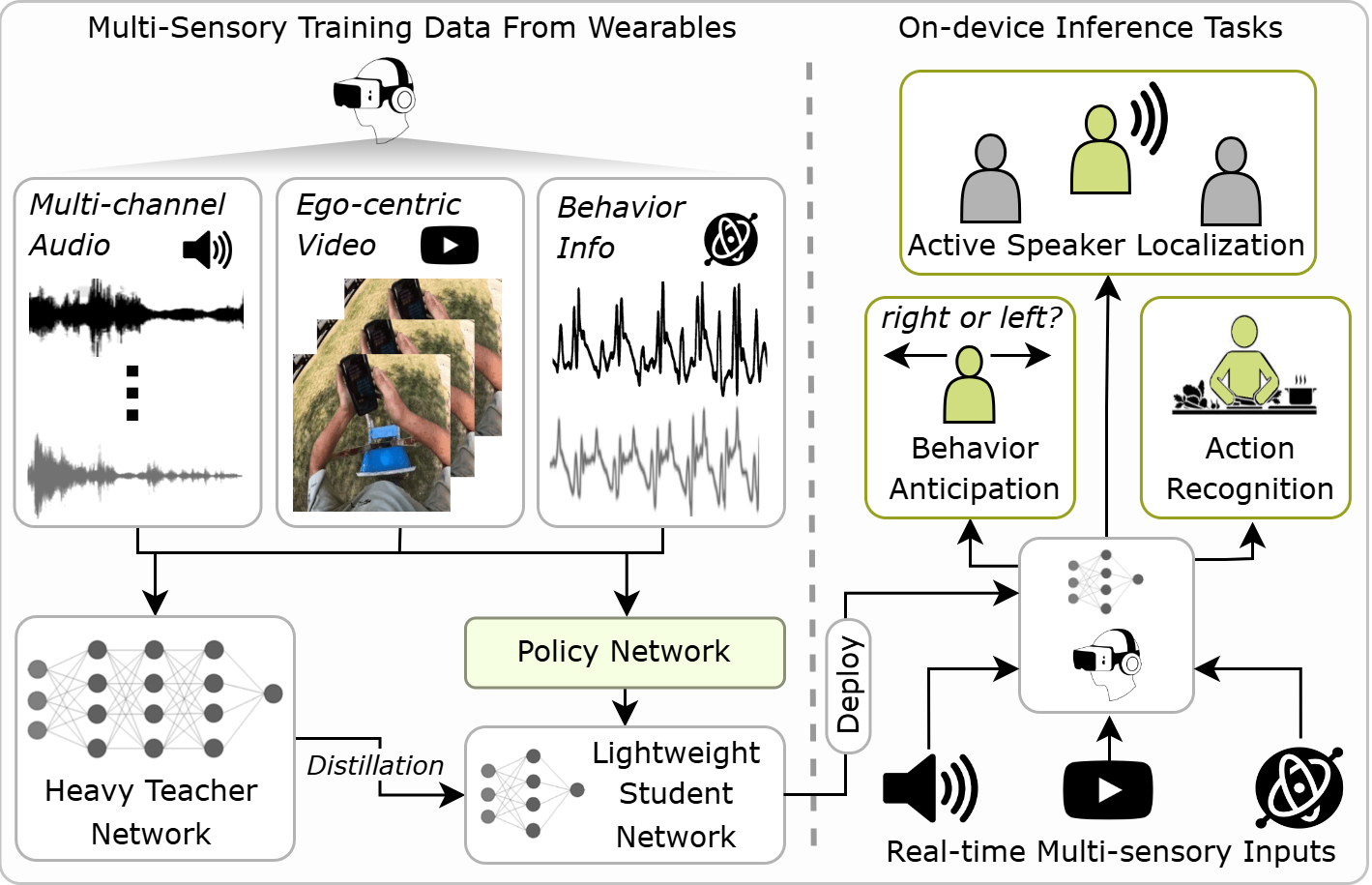

EgoAdapt: Adaptive Multisensory Distillation and Policy Learning for Efficient Egocentric Perception

Sanjoy Chowdhury, Subrata Biswas, Sayan Nag, Tushar Nagarajan, Calvin Murdock, Ishwarya Ananthabhotla, Yijun Qian, Vamsi Krishna Ithapu, Dinesh Manocha, Ruohan Gao

International Conference on Computer Vision (ICCV), 2025

Paper /

Project Page /

Code

|

|

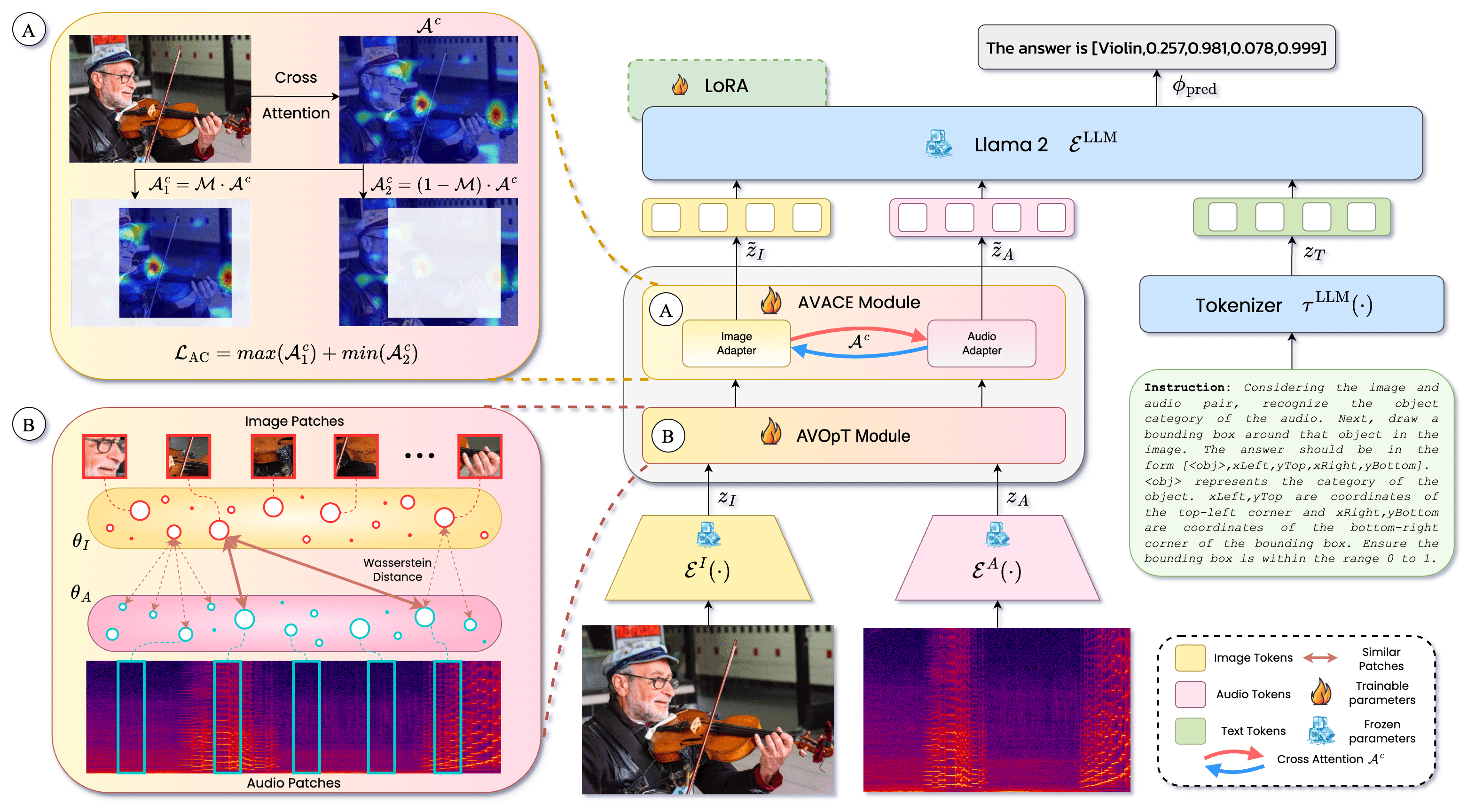

Meerkat: Audio-Visual Large Language Model for Grounding in Space and Time Meerkat: Audio-Visual Large Language Model for Grounding in Space and Time

Sanjoy Chowdhury*, Sayan Nag*, Subhrajyoti Dasgupta*, Jun Chen, Mohamed Elhoseiny, Ruohan Gao, Dinesh Manocha

European Conference on Computer Vision (ECCV), 2024

Paper/

Project Page /

Poster /

Video /

Dataset /

Code

|

|

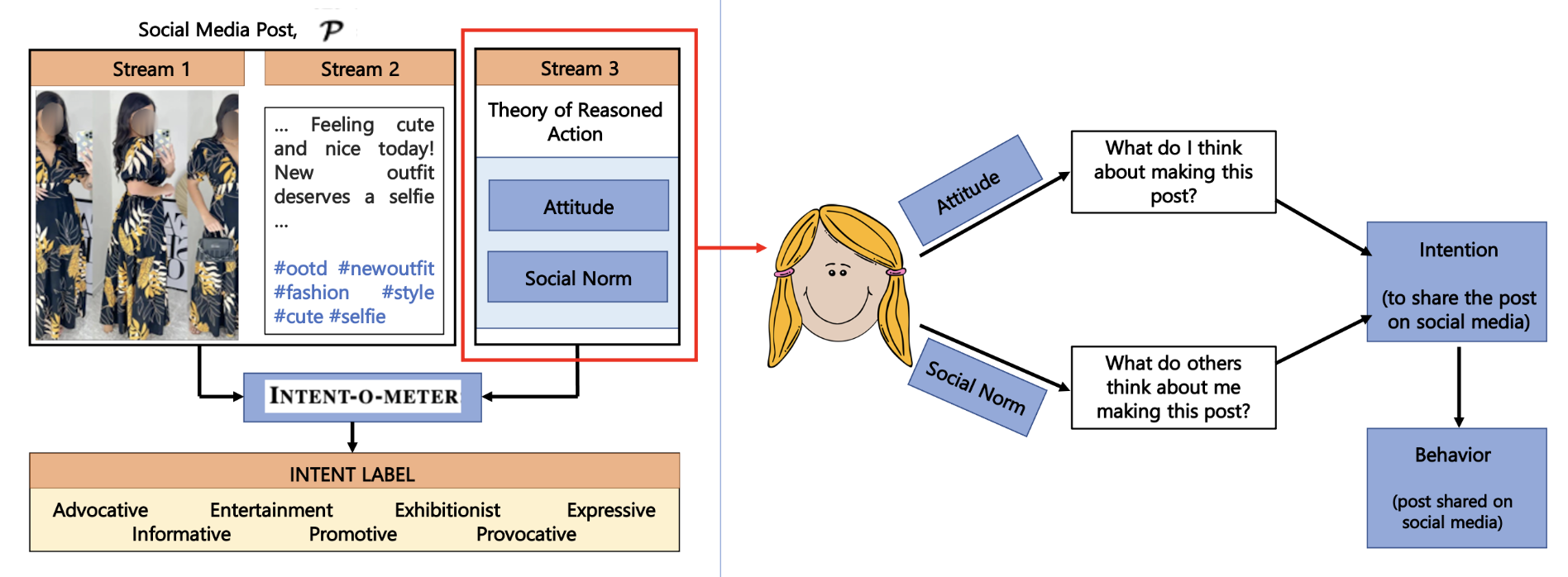

Towards Determining Perceived Human Intent for Multimodal Social Media Posts using The Theory of Reasoned Action

Trisha Mittal, Sanjoy Chowdhury, Pooja Guhan, Snikhita Chelluri, Dinesh Manocha

Nature Scientific Reports, 2024

Paper /

Dataset

|

|

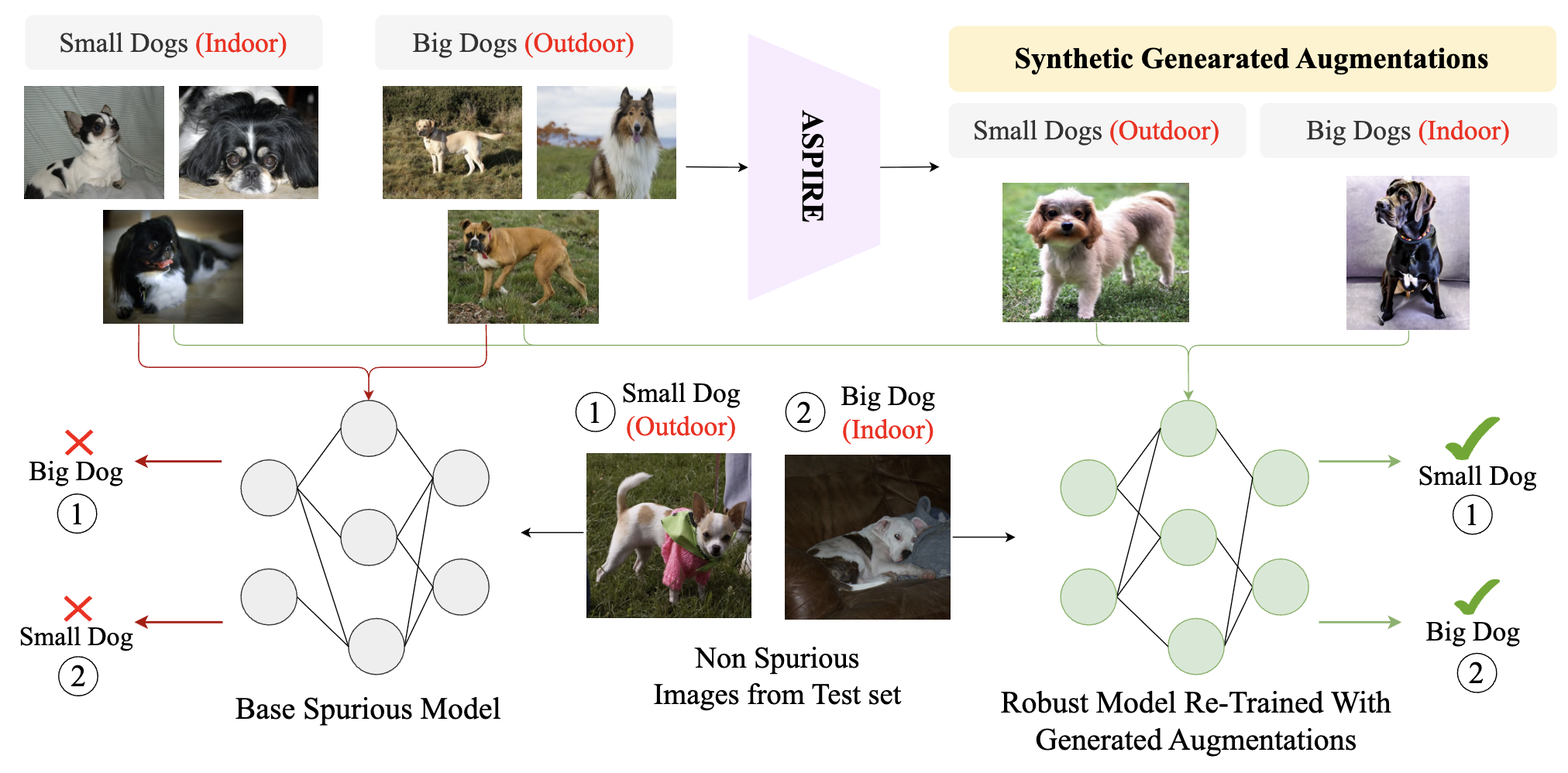

ASPIRE: Language-Guided Data Augmentation for Improving Robustness Against Spurious Correlations

Sreyan Ghosh*, Chandra Kiran Reddy Evuru*, Sonal Kumar, Utkarsh Tyagi, Sakshi Singh, Sanjoy Chowdhury, Dinesh Manocha

Association for Computational Linguistics(ACL Findings), 2024

Paper /

Code

|

|

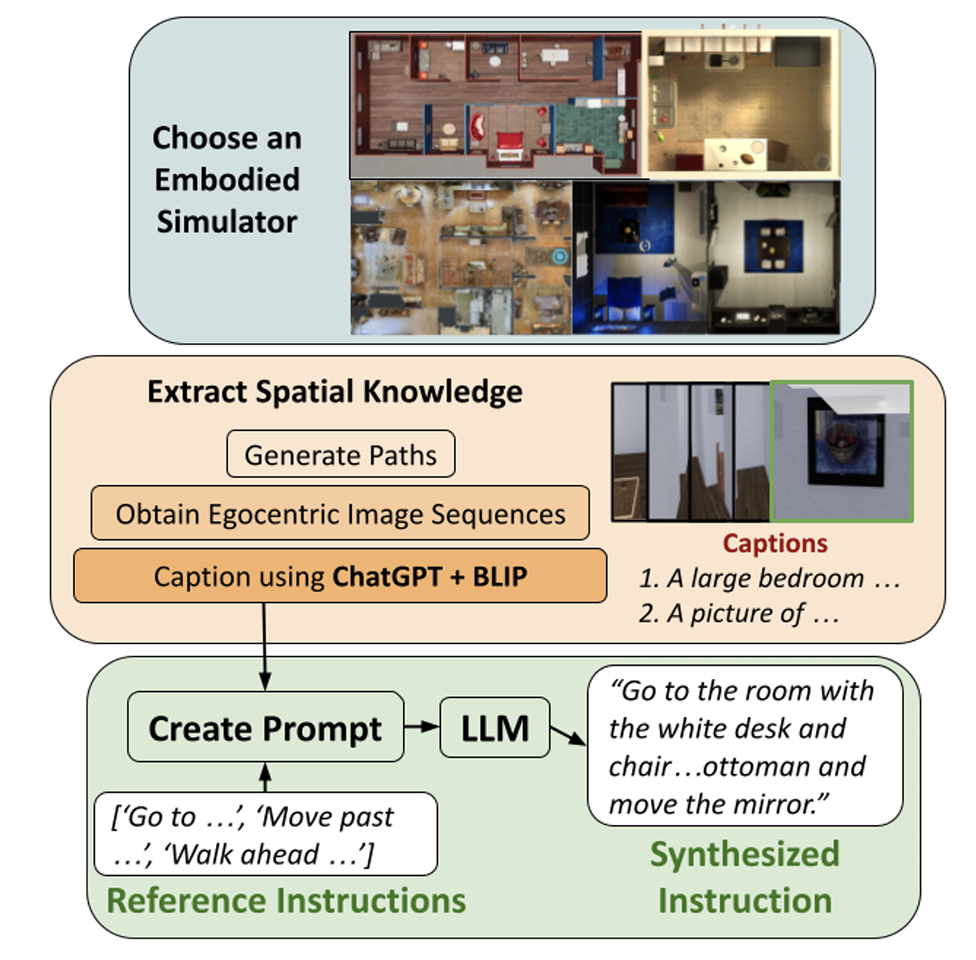

Can LLM’s Generate Human-Like Wayfinding Instructions? Towards Platform-Agnostic Embodied Instruction Synthesis

Vishnu Sashank Dorbala, Sanjoy Chowdhury, Dinesh Manocha

North American Chapter of the Association for Computational Linguistics (NAACL), 2024

Paper

|

|

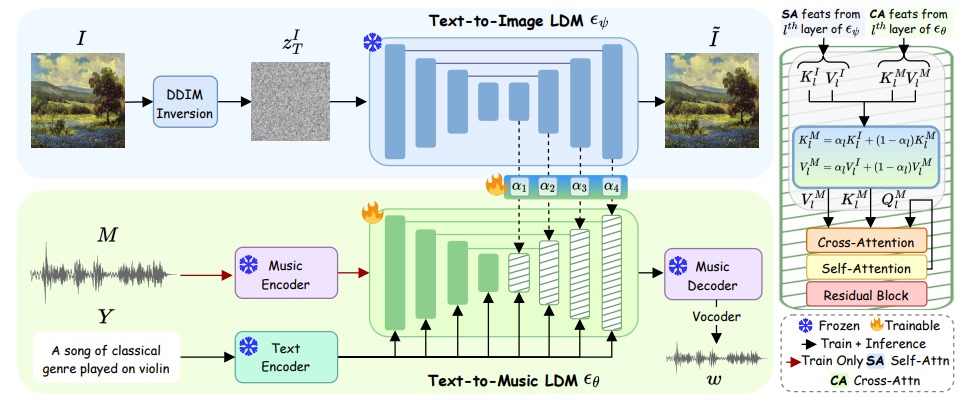

MeLFusion: Synthesizing Music from Image and Language Cues using Diffusion Models (Highlight, Top 2.8%)

Sanjoy Chowdhury*, Sayan Nag*, Joseph KJ, Balaji Vasan Srinivasan, Dinesh Manocha

Conference on Computer Vision and Pattern Recognition (CVPR), 2024

Paper/

Project Page /

Poster /

Video /

Dataset /

Code

|

|

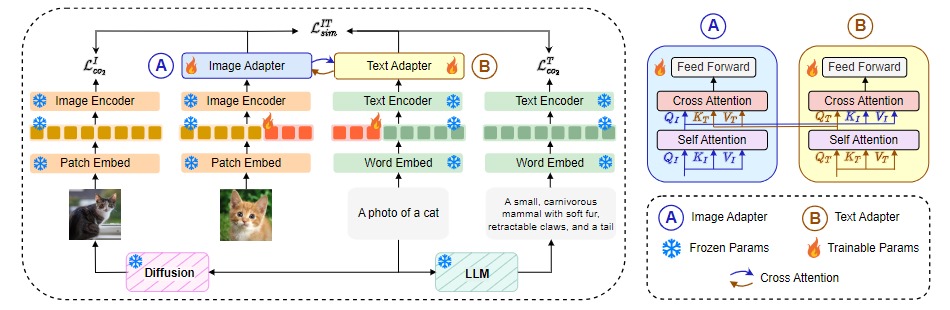

APoLLo  : Unified Adapter and Prompt Learning for Vision Language Models : Unified Adapter and Prompt Learning for Vision Language Models

Sanjoy Chowdhury*, Sayan Nag*, Dinesh Manocha

Conference on Empirical Methods in Natural Language Processing (EMNLP), 2023

Paper /

Project Page /

Poster /

Video /

Code

|

|

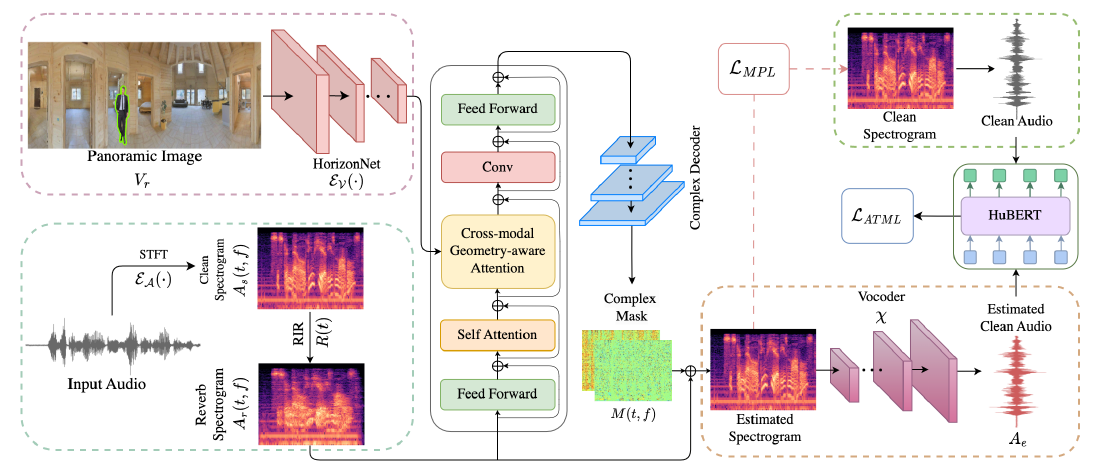

AdVerb: Visually Guided Audio Dereverberation

Sanjoy Chowdhury*, Sreyan Ghosh*, Subhrajyoti Dasgupta, Anton Ratnarajah, Utkarsh Tyagi, Dinesh Manocha

International Conference on Computer Vision (ICCV), 2023

Paper /

Project Page /

Video /

Poster /

Code

|

|

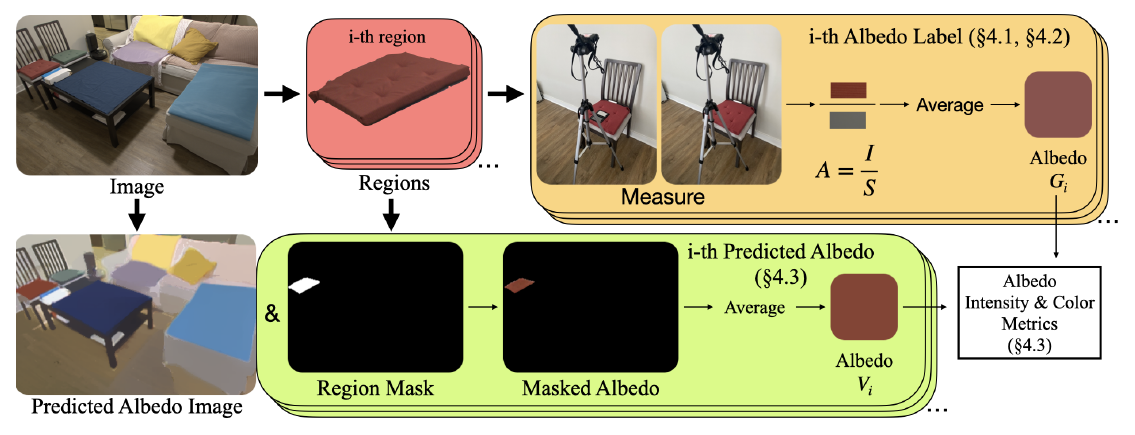

Measured Albedo in the Wild: Filling the Gap in Intrinsics Evaluation

Jiaye Wu, Sanjoy Chowdhury, Hariharmano Shanmugaraja, David Jacobs, Soumyadip Sengupta

International Conference on Computational Photography (ICCP), 2023

Paper /

Project Page /

Dataset

|

|

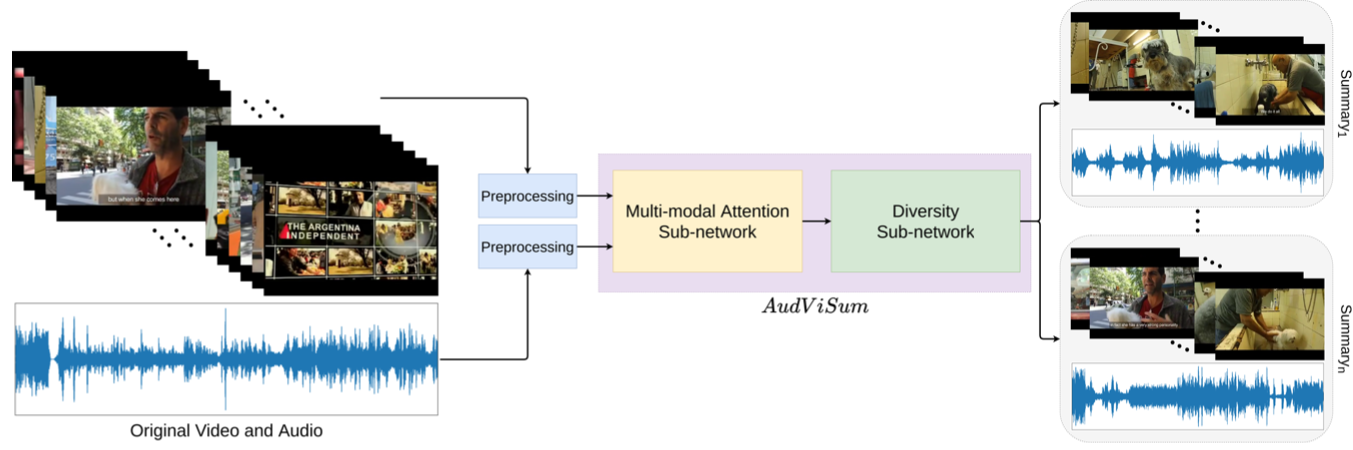

AudViSum: Self-Supervised Deep Reinforcement Learning for Diverse Audio-Visual Summary Generation

Sanjoy Chowdhury*, Aditya P. Patra*, Subhrajyoti Dasgupta, Ujjwal Bhattacharya

British Machine Vision Conference (BMVC), 2021

Paper /

Code /

Presentation

|

|

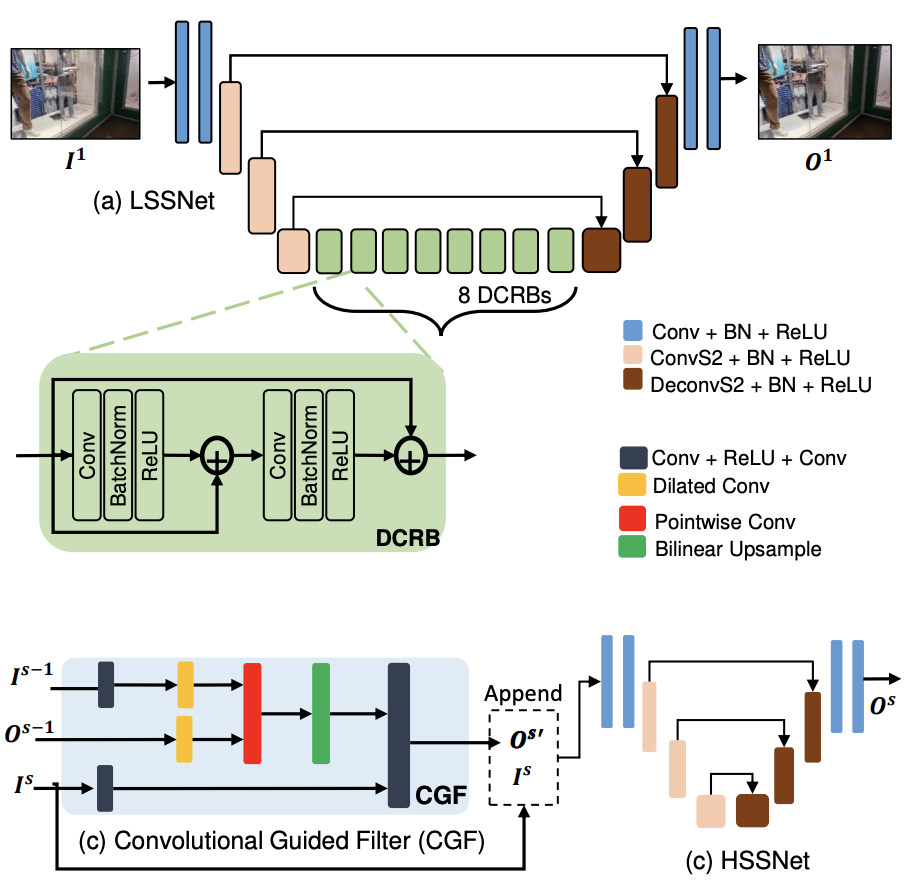

V-DESIRR: Very Fast Deep Embedded Single Image Reflection Removal

B H Pawan Prasad, Green Rosh K S, Lokesh R B, Kaushik Mitra, Sanjoy Chowdhury

International Conference on Computer Vision (ICCV), 2021

Paper /

Code

|

|

Listen to the Pixels

Sanjoy Chowdhury, Subhrajyoti Dasgupta, Sudip Das, Ujjwal Bhattacharya

International Conference on Image Processing (ICIP), 2021

Paper /

Code /

Presentation

|

Affiliations

IIT Kharagpur

Apr-Sep 2016

|

ISI Kolkata

Feb-July 2017

|

IIIT Hyderabad

Aug 2017 - May 2019

|

Mentor Graphics Hyderabad

May - July 2018

|

Samsung Research Bangalore

June 2019 - June 2021

|

ShareChat Bangalore

June 2021 - May 2022

|

UMD College Park

Aug 2022 - Jan 2026

|

Adobe Research

May 2023 - Aug 2023

|

KAUST

Jan 2024 - June 2025

|

Google Research

Feb 2024 - May 2024

|

Meta AI

May 2024 - Nov 2024

|

Apple MLR

Mar 2025 - Aug 2025

|

|

|